Context is the New Currency: How Understanding Your Data Unlocks AI-Readiness

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Suspendisse varius enim in eros elementum tristique. Duis cursus, mi quis viverra ornare, eros dolor interdum nulla, ut commodo diam libero vitae erat. Aenean faucibus nibh et justo cursus id rutrum lorem imperdiet. Nunc ut sem vitae risus tristique posuere.

This article is based on a piece originally published on Modern Data 101.

Enterprises have made significant investments in data infrastructure. Pipelines run reliably, warehouses scale on demand, and dashboards refresh in near real time. The foundation looks solid. Yet as AI agents step into operational roles, a new requirement is surfacing that most data platforms were not built to meet.

AI agents do not just need access to data. They need to understand it.

A human analyst brings institutional knowledge to every query. They know what a field abbreviation means, when the data was last refreshed, and which metrics require caution. AI agents do not have that luxury. They cannot infer meaning from context that lives in someone's head or a forgotten wiki page. For an agent to act reliably and autonomously, the meaning of data must travel with the data itself.

This is the context gap. And closing it requires a different way of thinking about how data is understood, packaged, and delivered. DataOS was built to close exactly this gap, providing the context layer that enables the data infrastructure to reason with the AI agents.

Why Metadata Became the Most Important Asset You Have

Context is data on data. It is the entity that transforms a column of numbers into a business signal, a table name into a governed asset, and a metric into something an AI agent can act on with confidence.

For years, metadata was treated as a byproduct of data management. Something to be documented when time permitted, organized in a catalog, and searched when needed. That approach worked well enough when the primary consumer of data was a human analyst who could fill in the gaps.

Agentic AI changes the equation entirely. Agents are intent-driven. They pursue specific business outcomes, evaluating transactions, adjusting prices, and resolving customer issues without pausing to consult a data dictionary or ask a colleague. If context is missing, agents either stall or act on incomplete information. Neither outcome delivers on the promise of automation.

The organizations getting the most out of AI today are not necessarily the ones with the most data. They are the ones who have built the richest context layer around the data they already have.

The Three Stacks That Build a Context-Rich Data Environment

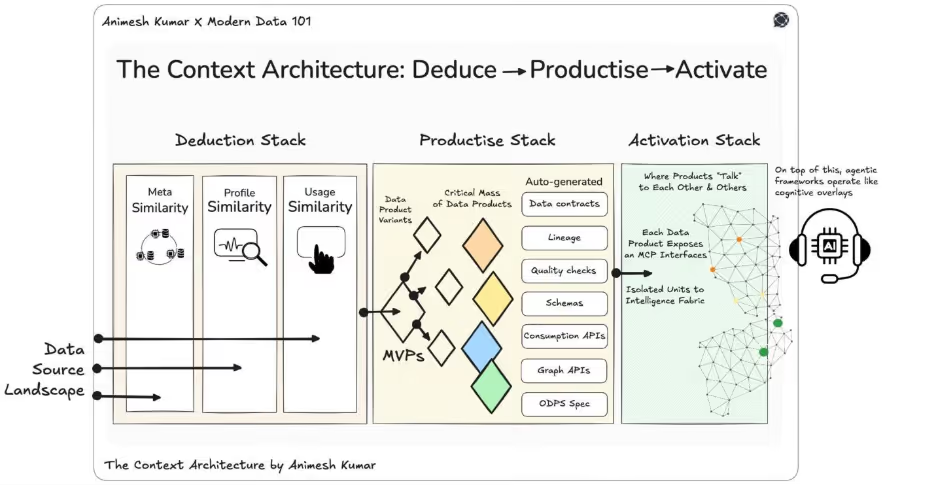

Moving from data storage to data understanding requires a structured approach. The Context Architecture organizes this shift into three connected stacks that work in sequence. Each one builds on the last, creating a closed intelligence loop across the enterprise data landscape.

The Deduction Stack: Building Intelligence from What You Have

Everything starts with understanding what you have.

Most data platforms can tell you that a table exists. A context-rich platform can tell you what that table means, how its contents behave, and who across the organization is actively using it. The Deduction Stack is how that deeper understanding gets built.

It works through three layers of similarity, each capturing a different dimension of intelligence about your data.

Meta Similarity: Understanding Structure

The first layer looks at structural resemblance across the data landscape. It examines field names, schema design, column types, table relationships, and ownership metadata across every connected source, whether that is Snowflake, Redshift, BigQuery, or an on-premises warehouse.

This layer answers the question: what does this data look like, and how does it relate to other data in the organization? It is what allows the system to recognize that a "customer_id" field in a Snowflake table and a "cust_id" field in a Redshift table are the same concept, even when the naming conventions differ across teams or systems.

Profile Similarity: Understanding Content

The second layer goes beyond structure into the data itself. It examines statistical distributions, value ranges, regex patterns, PII markers, and data quality signals across fields and tables.

Where meta similarity asks what a field is called, profile similarity asks what it contains. A field labeled "status" in one system might hold order states. The same label in another might hold customer lifecycle stages. Profile similarity distinguishes between them and identifies when two fields with different names are in fact carrying the same type of information.

This layer is also where data quality signals surface early. Anomalies, sparsity, outliers, and format inconsistencies are captured as part of the profile, so that anything built on top of this data inherits an accurate picture of its reliability.

Usage Similarity: Understanding Behavior

The third layer traces how data moves through the organization. It observes which tables are queried, by whom, how frequently, in what combinations, and for how long. It maps the behavioral fingerprint of every dataset across the data landscape.

Usage similarity reveals intent. Two tables that are consistently queried together, even if they look structurally different, are functionally related. A field that is never queried despite being well-documented is less valuable than its metadata suggests. A dataset that is accessed heavily by finance and risk teams carries a different weight than one used sporadically by a single analyst.

Together, meta, profile, and usage similarity give the deduction stack something a traditional catalog cannot provide: relational logic. Rather than indexing what exists, it reasons about what things mean and how they relate.

This is the core that DataOS builds upon. Its metadata management capabilities continuously capture and maintain all three layers throughout your entire data landscape, providing the platform with a living, evolving map of what exists, what it means, and how it connects across Snowflake, Redshift, BigQuery, and all other sources in your stack.

Intelligence as an Interface

Once the deduction stack has built this understanding, it needs to surface it in ways that different roles can act on. A Snowflake administrator sees cost and duplication insights. A data engineer sees schema drift and query performance signals. A business analyst sees which datasets are most actively used for decisions relevant to their domain.

DataOS exposes this intelligence through APIs, interfaces, and an MCP server, so that both human users and AI agents can query the understanding the system has built, not just the data underneath it.

The Productize Stack: Turning Understanding into Assets

Understanding the data landscape is valuable. Turning that understanding into deployable, governed assets is where business impact is realized.

The productize stack takes the intelligence built by the deduction stack and uses it to create data products: reusable, governed units that combine data, business context, quality guarantees, lineage, and consumption interfaces into a single deployable asset.

A data product is not a table or a pipeline with better documentation. It is a business capability.

From Intent to Deployment

When a product manager defines a unified customer profile data product, they specify the attributes it should include and the quality it must meet. The productize stack, armed with the deduction stack's understanding of the data landscape, identifies the most relevant sources across Snowflake, Redshift, or BigQuery, surfaces potential conflicts, and automatically generates the supporting scaffolding.

That scaffolding includes data contracts, lineage, quality checks, schemas, and consumption APIs, all derived from what the system already knows rather than assembled by a human.

DataOS makes this operational through its data product deployment capabilities. Teams define data products declaratively, stating intent rather than writing implementation logic. DataOS handles the orchestration underneath, so the data product that exists on paper and the one running in production are the same.

Lineage That Travels with the Data

Lineage is embedded at this stage, not bolted on afterward. Every data product in DataOS includes a complete record of where its data came from, which transformations were applied, which sources in Redshift or Snowflake it draws from, and which contracts govern its use. That lineage does not sit in a separate tool. It travels with the data product, ensuring that every downstream consumer, human or AI, is fully aware of the data's origin and trustworthiness.

Over time, as more data products are deployed, each new data product compounds the value of the last, because every data product is built on a foundation of shared understanding rather than rebuilt from scratch.

The Activate Stack: Powering the Agentic Enterprise

Data products become truly valuable when they reach the people and systems that need them.

The activate stack connects data products into a shared fabric accessible by both human teams and AI agents. Each data product exposes standard consumption interfaces, including APIs, SQL, and GraphQL. More importantly, each data product is self-describing. It communicates what it contains, how it can be queried, and what governance policies apply to it.

What Self-Describing Data Products Enable

This self-description is what makes agentic AI viable at scale. When a fraud detection agent queries a data product and receives not just a risk flag but the business definition behind it, the lineage that confirms its source in Snowflake, the quality guarantee that validates its freshness, and the governance policy that authorizes the action it is about to take, it does not need to guess. It acts with confidence.

Without this layer, agents see a column called "risk_flag" with a value of TRUE. They have no basis for deciding whether to act, escalate, or wait. With a fully activated data product, the agent understands what that signal means, where it originated from, and whether it is authorized to act on it.

Collective Intelligence Across the Ecosystem

DataOS exposes data products through an MCP interface that agentic frameworks can reason across, inferring relationships between data products and answering questions that span across multiple domains without human mediation. As data products communicate with and infer from one another, the ecosystem develops collective intelligence. Relationships that were never explicitly modeled, such as the connection between a customer record in Redshift and a transaction history in BigQuery, emerge through reasoning rather than through hard-coded joins.

The result is an environment where AI agents can operate not just in demos, but in production, because the data they rely on is trustworthy, governed, and semantically complete.

Where Catalogs Fit in This Picture

Catalogs remain valuable, but their role evolves in a context-rich environment.

Rather than sitting at the center of the data platform as the primary source of understanding, catalogs move toward the end of the pipeline. They become a search and discovery surface over context that has already been reasoned, structured, and validated by the deduction stack. Instead of indexing raw assets from Snowflake or Redshift, they surface the deduced knowledge built on top of them.

This shift makes catalogs more useful, not less. A catalog backed by a full context layer can answer questions that a static inventory never could. What data products are available for a given business domain? Which ones meet the quality bar required for an AI use case? What lineage connects this metric to its source system? These are questions a business user or an agent can ask and get a reliable answer.

DataOS makes this possible by powering the catalog with a continuously maintained, semantically enriched understanding of the data landscape, so search and discovery surface the meaning of the data, not just inventory.

Deduce, Productize, Activate

The three stacks form a complete system.

The deduction stack gives the organization a living, reasoning map of its data landscape. The productize stack turns that map into governed, deployable data products. The activate stack makes those data products accessible to every human team and AI agent that needs them.

Together, they create the conditions for AI-readiness: not just data that exists, but data that is understood, trusted, and ready to act on.

The organizations that build this foundation are not just improving their data operations. They are building the infrastructure that makes every future AI initiative faster, more reliable, and more impactful from day one.

DataOS is the platform built to make this possible. From automated metadata capture and semantic enrichment, to declarative data product deployment and an MCP interface that makes every data product accessible to AI agents, DataOS operationalizes the full Deduce → Productize → Activate cycle, so AI-readiness isn't a future state. It's something you can build toward today.

Ready to Move AI From Pilots to Production? Understanding, productizing, and activating your data is the foundation. The next step is putting it into practice. The AI-Ready Data Playbook walks through the gap between analytics-ready and AI-ready data and provides a practical roadmap to get there. Download your copy to see how organizations are making the shift. Download the Playbook