Data Trust Management

Make data quality a systemic control across the bank

DataOS embeds enforceable quality, governance, and policy controls directly into data pipelines and data products so every report, model, and decision runs on trusted data.

Audit-ready lineage.

Protected risk models.

Continuous data integrity across the entire data stack.

Why data quality breaks in large banks

Most banks still manage data quality through scattered checks, dashboards, and reconciliation processes.

Problems are often detected only after bad data has already spread across reporting systems, risk models, and analytics platforms.

When data quality controls are fragmented:

Who this solution is for

Data Trust Management is designed for large financial institutions operating complex data environments.

Chief data officers and data governance leaders

Responsible for enterprise data quality and governance frameworks.

Chief risk officers and model risk teams

Ensuring that risk models and regulatory calculations operate on trusted data.

Chief information officers and data platform leaders

Managing large data platforms and pipeline ecosystems.

Regulatory reporting and compliance leaders

Responsible for audit-ready reporting under frameworks such as BCBS 239.

How DataOS creates systemic data integrity

DataOS provides a control layer that governs the entire enterprise data lifecycle.

Instead of detecting issues after pipelines run, the platform enforces data quality, governance, and policy rules directly within the data flow.

Data is validated before it reaches downstream models, reports, and decision systems.

Operations, risk, and engineering teams all work from trusted data.

What systemic data integrity looks like

Traditional data quality tools

- Quality checks run after pipelines complete

- Issues discovered in downstream reports

- Technical checks only

- Manual reconciliation across systems

- Lineage reconstructed during audits

Data trust management with DataOS

- Quality enforcement built into pipelines

- Issues detected and blocked upstream

- Business and regulatory rules enforced

- Trusted data shared across teams

- Lineage captured automatically

Why business context matters for data quality

In banking environments, data can pass technical checks while still violating regulatory definitions or product rules.

Data Trust Management validates data using both technical rules and business logic so risk, finance, and regulatory teams can rely on the results.

Quality checks and validation rules are embedded directly into ingestion and transformation pipelines so bad data is blocked or corrected before it spreads.
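As a rough illustration of an in-pipeline validation gate, the sketch below applies both technical and business rules to each record and blocks any record that fails. It is plain Python, not the DataOS API; the `Rule` type, the `validate` function, and the example rules (including the "savings accounts cannot be overdrawn" product rule) are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Callable

Record = dict[str, object]


@dataclass(frozen=True)
class Rule:
    """A named check; `kind` is "technical" or "business" (hypothetical labels)."""
    name: str
    kind: str
    check: Callable[[Record], bool]


def _is_number(value: object) -> bool:
    return isinstance(value, (int, float)) and not isinstance(value, bool)


# Illustrative rules only; real rules would come from the bank's own definitions.
RULES = [
    Rule("balance_is_number", "technical", lambda r: _is_number(r.get("balance"))),
    Rule(
        "currency_is_three_letters",
        "technical",
        lambda r: isinstance(r.get("currency"), str) and len(r["currency"]) == 3,
    ),
    # Business rule: technically valid data can still break a product rule.
    Rule(
        "savings_not_overdrawn",
        "business",
        lambda r: not (
            r.get("product") == "savings"
            and _is_number(r.get("balance"))
            and r["balance"] < 0
        ),
    ),
]


def validate(records: list[Record], rules: list[Rule]):
    """Return (passed, blocked); each blocked entry carries the failed rule names."""
    passed, blocked = [], []
    for record in records:
        failed = [rule.name for rule in rules if not rule.check(record)]
        if failed:
            blocked.append((record, failed))
        else:
            passed.append(record)
    return passed, blocked
```

In this sketch, only the `passed` list would continue downstream; the `blocked` list, with its failed rule names, is what a correction or quarantine step would work from.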

The platform monitors vendor feeds, partner integrations, and internal systems to detect schema drift or contract violations.
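One simple way to picture schema drift detection is to compare the columns a feed actually delivers against the columns its data contract promises. The sketch below does that in plain Python; the function name, the contract format (column name mapped to a type name), and the sample columns are hypothetical and do not represent how DataOS stores or checks contracts.

```python
def detect_schema_drift(
    contract: dict[str, str], observed: dict[str, str]
) -> dict[str, list[str]]:
    """Compare an observed schema to a contract; both map column name -> type name."""
    missing = sorted(col for col in contract if col not in observed)
    unexpected = sorted(col for col in observed if col not in contract)
    type_changed = sorted(
        col for col in contract if col in observed and contract[col] != observed[col]
    )
    return {"missing": missing, "unexpected": unexpected, "type_changed": type_changed}


def violates_contract(drift: dict[str, list[str]]) -> bool:
    """Assumption for this sketch: any missing or retyped column is a violation;
    extra columns are reported but tolerated."""
    return bool(drift["missing"] or drift["type_changed"])


# Hypothetical vendor trade feed.
contract = {"trade_id": "string", "notional": "decimal", "trade_date": "date"}
observed = {"trade_id": "string", "notional": "float", "settle_date": "date"}

drift = detect_schema_drift(contract, observed)
```

Whether an unexpected extra column should count as a violation is a policy choice; the sketch tolerates it, but a stricter contract could reject it.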

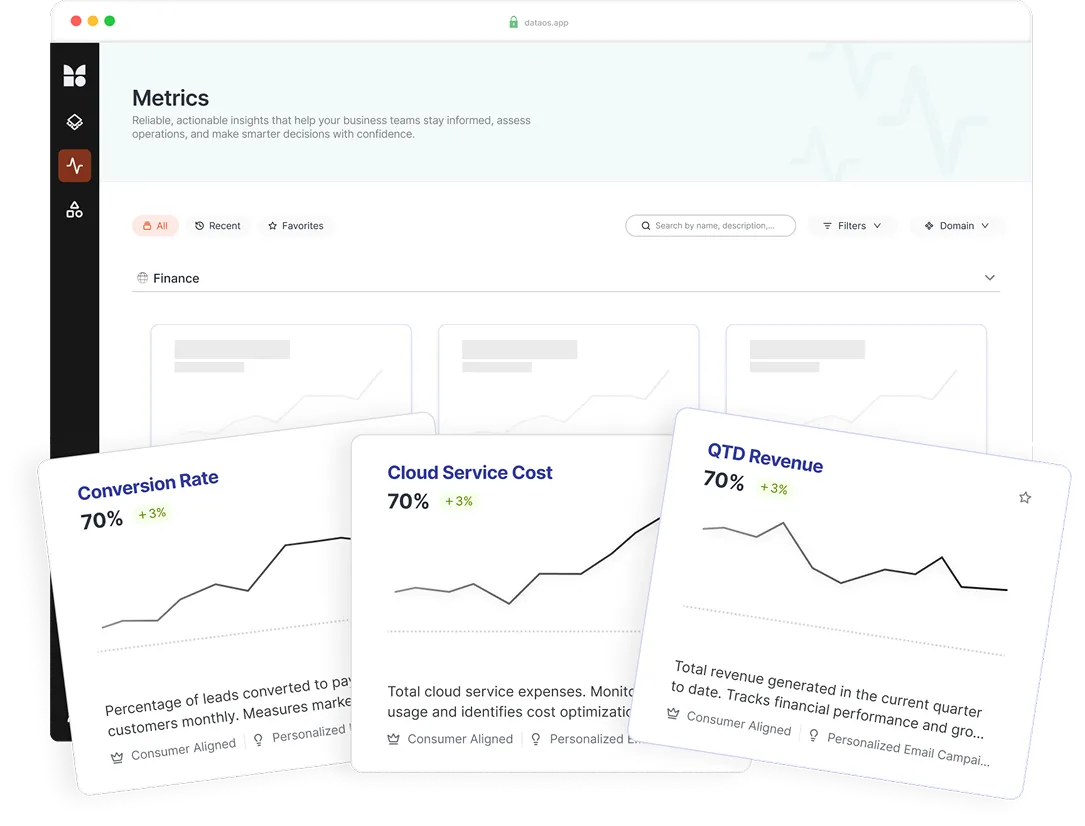

Data is packaged into governed data products with ownership, quality signals, documentation, and lifecycle controls.

Data integrity is maintained through a continuous process:

Detect issues

Evaluate policies and rules

Trigger remediation actions

Capture lineage and audit evidence
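The four steps above can be sketched as a small loop. This is a minimal illustration in plain Python, not DataOS code: the issue names, the `POLICY` mapping from issue to action, and the `AuditLog` class are all assumptions invented for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: which remediation action each detected issue triggers.
POLICY = {"null_key": "quarantine", "duplicate_key": "alert"}


@dataclass
class AuditLog:
    """Collects evidence of what was detected and what was done about it."""
    entries: list[dict] = field(default_factory=list)

    def record(self, dataset: str, issue: str, action: str) -> None:
        self.entries.append(
            {
                "dataset": dataset,
                "issue": issue,
                "action": action,
                "at": datetime.now(timezone.utc).isoformat(),
            }
        )


def detect(rows: list[dict]) -> list[str]:
    """Step 1: detect issues (here, only null or duplicate account IDs)."""
    ids = [row.get("account_id") for row in rows]
    issues = []
    if any(i is None for i in ids):
        issues.append("null_key")
    non_null = [i for i in ids if i is not None]
    if len(non_null) != len(set(non_null)):
        issues.append("duplicate_key")
    return issues


def run_cycle(dataset: str, rows: list[dict], audit: AuditLog) -> list[str]:
    """Steps 2 to 4: evaluate policy, choose an action, capture audit evidence."""
    actions = []
    for issue in detect(rows):
        action = POLICY.get(issue, "alert")  # default action is an assumption
        audit.record(dataset, issue, action)
        actions.append(action)
    return actions
```

Running `run_cycle` on every batch, rather than once after the fact, is what makes the process continuous; the audit entries accumulate as evidence alongside it.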

DataOS sits on top of the existing data stack and orchestrates governance, quality enforcement, metadata, and access control across systems.

Built for regulatory-grade environments

Banks require data governance that supports both operational reliability and regulatory compliance.

Proven operational impact with DataOS

Banks implementing systemic data integrity with DataOS see measurable improvements.

Frequently Asked Questions

Data trust management enforces data quality, governance, and validation policies across the entire data lifecycle so banks can rely on their data for reporting, risk models, and analytics.

Traditional tools detect issues after pipelines run. DataOS embeds enforcement policies directly into pipelines so bad data is prevented from propagating.

The platform automatically captures lineage, governance metadata, and enforcement evidence required for regulatory frameworks such as BCBS 239.

Yes. DataOS operates as a control layer on top of existing data warehouses, pipelines, and tools without replacing them.

Continuous monitoring detects schema drift or vendor data changes before they affect model inputs.

Banks can begin enforcing systemic data integrity within weeks by deploying DataOS across priority data domains.

Yes. AI systems depend on trusted training and inference data. DataOS ensures those systems operate on validated and governed data products.